Logistic regression is a basic machine learning model for binary classification; do not mistake this linear model for one that directly handles multi-class classification.

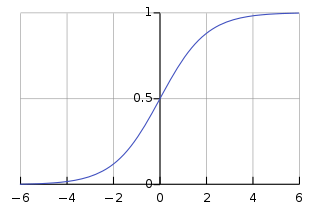

Assume that you already know the famous sigmoid function:

$$\sigma(w \cdot x) = \frac{1}{1 + e^{-w \cdot x}}$$

The bias term $b$ is omitted for convenience. The sigmoid function is an S-shaped curve that squashes the whole real line into the range $(0, 1)$, so its output can be interpreted as the probability of a certain class.
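
As a minimal sketch, the sigmoid can be written in plain Python (the bias is omitted, as above). The two-branch form is an assumption of this sketch, a common trick so that `math.exp` never receives a large positive argument; mathematically both branches equal $1/(1+e^{-z})$.

```python
import math

def sigmoid(z: float) -> float:
    """Logistic sigmoid 1 / (1 + e^(-z)), computed without overflow."""
    if z >= 0:
        # Here e^(-z) <= 1, so math.exp cannot overflow
        return 1.0 / (1.0 + math.exp(-z))
    # For negative z, use the equivalent form e^z / (1 + e^z),
    # where e^z < 1, to avoid overflow of e^(-z)
    ez = math.exp(z)
    return ez / (1.0 + ez)

for z in (-10.0, -1.0, 0.0, 1.0, 10.0):
    print(f"sigmoid({z:+.1f}) = {sigmoid(z):.4f}")
```

Note that `sigmoid(0.0)` is exactly 0.5, and `sigmoid(z) + sigmoid(-z)` equals 1 up to rounding, which is why the 0.5 threshold splits the two classes symmetrically.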

Let's look back at $w \cdot x$. Whether $w$ is written as a vector or a matrix does not matter; what matters is that the dot product outputs a scalar, which is then fed into the sigmoid function.

From another point of view, we can regard the aforementioned $w$ as the difference of two weight vectors, $\Delta w = w_1 - w_0$.

Then $\Delta w \cdot x = w_1 \cdot x - w_0 \cdot x$, where $w_1$ is the weight vector for class 1 and $w_0$ is the weight vector for class 0.

The scores $w_1 \cdot x$ and $w_0 \cdot x$ can be seen as confidence values that the input belongs to class 1 and class 0, respectively.

If $w_1 \cdot x > w_0 \cdot x$, then $w \cdot x > 0$, so the sigmoid output is greater than 0.5, which means the input $x$ is more likely to belong to class 1; and vice versa.
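
The decision rule above can be checked numerically. The weight vectors `w1`, `w0` and the input `x` below are made-up values for illustration only; the sketch forms $w = w_1 - w_0$ and confirms that $w_1 \cdot x > w_0 \cdot x$ exactly when the sigmoid output exceeds 0.5.

```python
import math

def dot(a, b):
    """Dot product of two equal-length sequences."""
    return sum(ai * bi for ai, bi in zip(a, b))

def sigmoid(z):
    # Plain form; fine for the small scores used here
    return 1.0 / (1.0 + math.exp(-z))

# Hypothetical weight vectors for class 1 and class 0, and one input x
w1 = [0.8, -0.2, 0.5]
w0 = [0.1, 0.4, -0.3]
x = [1.0, 2.0, 0.5]

# The single logistic-regression weight vector is the difference w = w1 - w0
w = [a - b for a, b in zip(w1, w0)]

score1, score0 = dot(w1, x), dot(w0, x)
p1 = sigmoid(dot(w, x))
print(f"w1.x = {score1:.3f}, w0.x = {score0:.3f}, P(class 1) = {p1:.3f}")

# w1.x > w0.x exactly when w.x > 0, i.e. when P(class 1) > 0.5
assert (score1 > score0) == (p1 > 0.5)
```

With these particular values $w_0 \cdot x$ is the larger score, so the sigmoid output falls below 0.5 and the input is assigned to class 0.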

Sigmoid

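Now we can put $\Delta w$ back into the sigmoid function:

$$\sigma(\Delta w \cdot x) = \frac{1}{1 + e^{-(w_1 \cdot x - w_0 \cdot x)}}$$

Dividing both the numerator and the denominator by $e^{-w_1 \cdot x}$ gives:

$$\sigma(\Delta w \cdot x) = \frac{e^{w_1 \cdot x}}{e^{w_1 \cdot x} + e^{w_0 \cdot x}}$$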

This means that the probability of class 1 is the ratio of the confidence value of class 1, $e^{w_1 \cdot x}$, to the sum of the confidence values of both classes.
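
A quick numerical sanity check of this identity, as a sketch with hypothetical score pairs standing in for $(w_1 \cdot x, w_0 \cdot x)$: the sigmoid of the score difference and the two-class ratio should agree up to floating-point error.

```python
import math

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

def two_class_ratio(s1, s0):
    """e^{s1} / (e^{s1} + e^{s0}): class-1 confidence over the sum of both."""
    e1, e0 = math.exp(s1), math.exp(s0)
    return e1 / (e1 + e0)

# Hypothetical pairs of scores (w1.x, w0.x)
for s1, s0 in [(0.65, 0.75), (2.0, -1.0), (-3.0, 0.5)]:
    lhs = sigmoid(s1 - s0)
    rhs = two_class_ratio(s1, s0)
    print(f"sigmoid: {lhs:.6f}  ratio: {rhs:.6f}")
    assert math.isclose(lhs, rhs)
```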

The sigmoid function can only express this ratio (probability) for class 1 out of the two classes.

But if we extend this to more than two classes, can we use the same formula to compute the ratio of a certain class to all classes?

Softmax

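Assume we have $K$ classes with weight vectors $w_1, w_2, \ldots, w_K$, and we use $e^{w_i \cdot x}$ to represent the confidence value of class $i$. Imitating the form of the formula above, the ratio for class $i$ is:

$$P(y = i \mid x) = \frac{e^{w_i \cdot x}}{e^{w_1 \cdot x} + e^{w_2 \cdot x} + \cdots + e^{w_K \cdot x}}$$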

Writing the denominator compactly with the summation symbol $\Sigma$, we get the well-known softmax function:

$$P(y = i \mid x) = \frac{e^{w_i \cdot x}}{\sum_{j=1}^{K} e^{w_j \cdot x}}$$
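
A minimal plain-Python sketch of softmax over $K$ class scores $w_i \cdot x$. The weight matrix `W` and input `x` are hypothetical. Subtracting the maximum score before exponentiating is an implementation choice of this sketch: the common factor cancels between numerator and denominator, so the result is unchanged, but `math.exp` cannot overflow.

```python
import math

def softmax(scores):
    """softmax(s)_i = e^{s_i} / sum_j e^{s_j}, shifted by max(s) for stability."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def dot(a, b):
    """Dot product of two equal-length sequences."""
    return sum(ai * bi for ai, bi in zip(a, b))

# Hypothetical weight vectors for K = 3 classes (one row per class) and one input x
W = [[0.8, -0.2, 0.5],
     [0.1, 0.4, -0.3],
     [-0.5, 0.3, 0.9]]
x = [1.0, 2.0, 0.5]

probs = softmax([dot(w_i, x) for w_i in W])
print([round(p, 4) for p in probs])
print(sum(probs))  # the K probabilities sum to 1 (up to floating-point rounding)
```

The class with the largest score $w_i \cdot x$ always receives the largest probability, since $e^{z}$ is monotonically increasing.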

Binary classification

We all know that sigmoid is for binary classification while softmax is for multi-class classification.

But what is the difference between sigmoid and softmax when both are applied to binary classification?

- First, sigmoid outputs only one value, while softmax outputs a vector of two values, one for each class.
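
To make this difference concrete, here is a sketch with hypothetical scores for one input: sigmoid applied to the score difference returns a single number, $P(\text{class 1})$, while softmax over the two scores returns both probabilities. By the derivation in the Sigmoid section, the first softmax entry equals the sigmoid output and the second equals one minus it.

```python
import math

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

def softmax(scores):
    """Softmax over a list of scores, shifted by the max for stability."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

# Hypothetical class scores w1.x and w0.x for one input
s1, s0 = 2.0, -1.0

p_sigmoid = sigmoid(s1 - s0)    # one number: P(class 1)
p_softmax = softmax([s1, s0])   # a vector: [P(class 1), P(class 0)]

print(p_sigmoid, p_softmax)
assert math.isclose(p_sigmoid, p_softmax[0])
assert math.isclose(1 - p_sigmoid, p_softmax[1])
```

Only the difference `s1 - s0` affects either output, which matches the earlier view of $w$ as $\Delta w = w_1 - w_0$.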
