History:

- Origins: Algorithms that try to mimic the brain

- Neural networks were widely used in the 1980s and early 1990s

- Recent resurgence: state-of-the-art technique for many applications

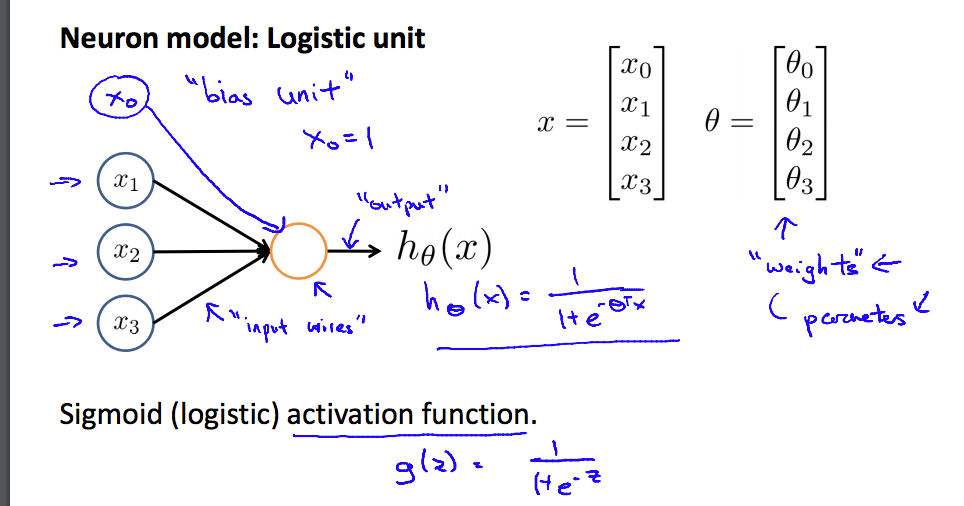

Neuron Model:

- A single neuron is modeled as a logistic unit

- It uses the sigmoid (logistic) activation function

- $x$ is the input and $h_\theta(x)$ is the output

- $\theta$ holds the "weights" (parameters)

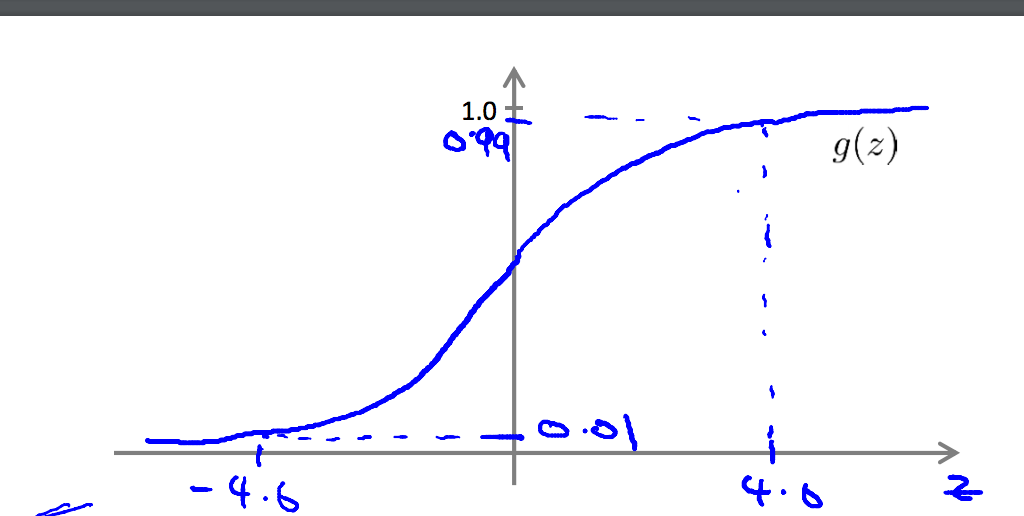
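To make the logistic unit concrete, here is a minimal sketch in plain Python. The function names `sigmoid` and `logistic_unit` and the example weights are illustrative assumptions, not something taken from the notes; the only thing relied on is the definition $h_\theta(x) = g(\theta^T x)$ with $g(z) = 1 / (1 + e^{-z})$ and a bias input $x_0 = 1$.

```python
import math


def sigmoid(z):
    """Logistic function g(z) = 1 / (1 + e^(-z))."""
    return 1.0 / (1.0 + math.exp(-z))


def logistic_unit(theta, x):
    """Compute h_theta(x) = g(theta^T x) for a single neuron.

    `x` holds the raw inputs x1..xn; the bias input x0 = 1 is prepended here,
    so `theta` must have len(x) + 1 entries.
    """
    inputs = [1.0] + list(x)
    z = sum(t * xi for t, xi in zip(theta, inputs))
    return sigmoid(z)


# Illustrative weights (assumed, not from the notes): z = -1 + 2*1 + 0.5*2 = 2
print(logistic_unit([-1.0, 2.0, 0.5], [1.0, 2.0]))
```

This simple `sigmoid` can overflow for very large negative `z`; that is acceptable for a sketch but worth guarding against in real code.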

The graph of $g(z)$ is as follows:

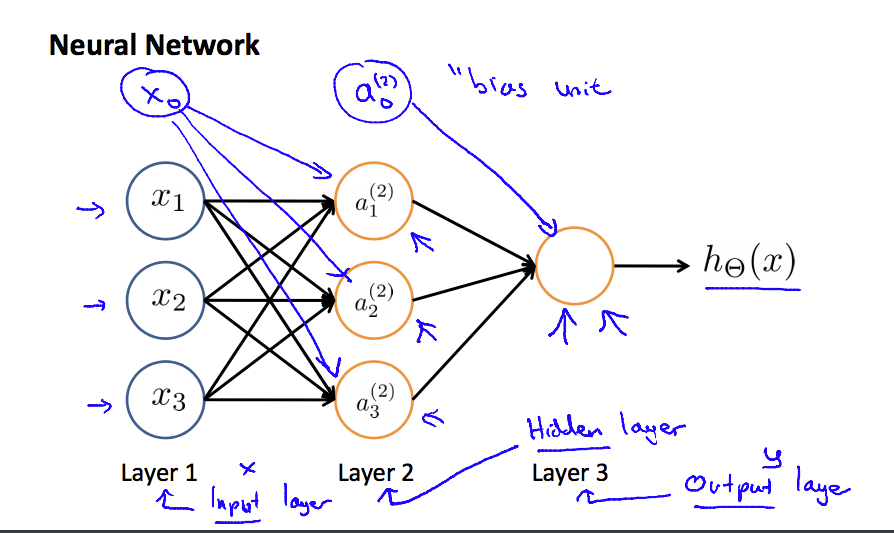

$x_0$ and $a_0^{(2)}$ are the bias units; both are always equal to 1.

Every layer except the input layer and the output layer is a hidden layer.

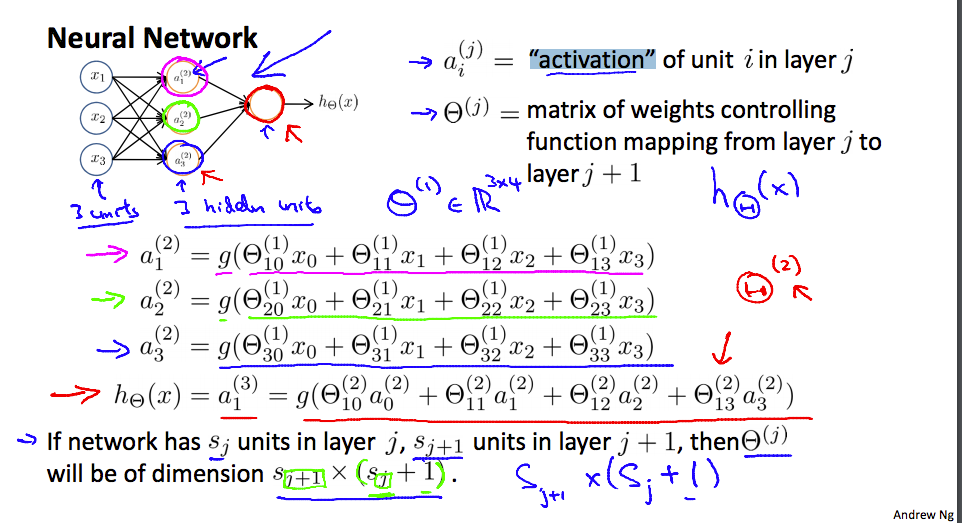

Pay attention to the expressions, and especially to the meaning of $a_i^{(j)}$ (the activation of unit $i$ in layer $j$) and $\Theta^{(j)}$ (the matrix of weights that maps layer $j$ to layer $j+1$).

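If layer $j$ has $s_j$ units and layer $j+1$ has $s_{j+1}$ units, then $\Theta^{(j)}$ has dimension $s_{j+1} \times (s_j + 1)$. The extra column accounts for the bias unit.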
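The dimension rule can be checked with a short sketch. The helper name `theta_shapes` and the layer sizes used here (3 inputs, 3 hidden units, 1 output) are assumptions chosen for illustration; unit counts exclude the bias unit.

```python
def theta_shapes(layer_sizes):
    """Return the (rows, cols) shape of each weight matrix Theta^(j).

    `layer_sizes[j]` is the number of units in layer j+1, bias unit excluded.
    Theta^(j) maps layer j to layer j+1, so it has s_(j+1) rows and
    s_j + 1 columns (the +1 is the bias unit).
    """
    return [(s_next, s_j + 1) for s_j, s_next in zip(layer_sizes, layer_sizes[1:])]


print(theta_shapes([3, 3, 1]))  # [(3, 4), (1, 4)]
```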

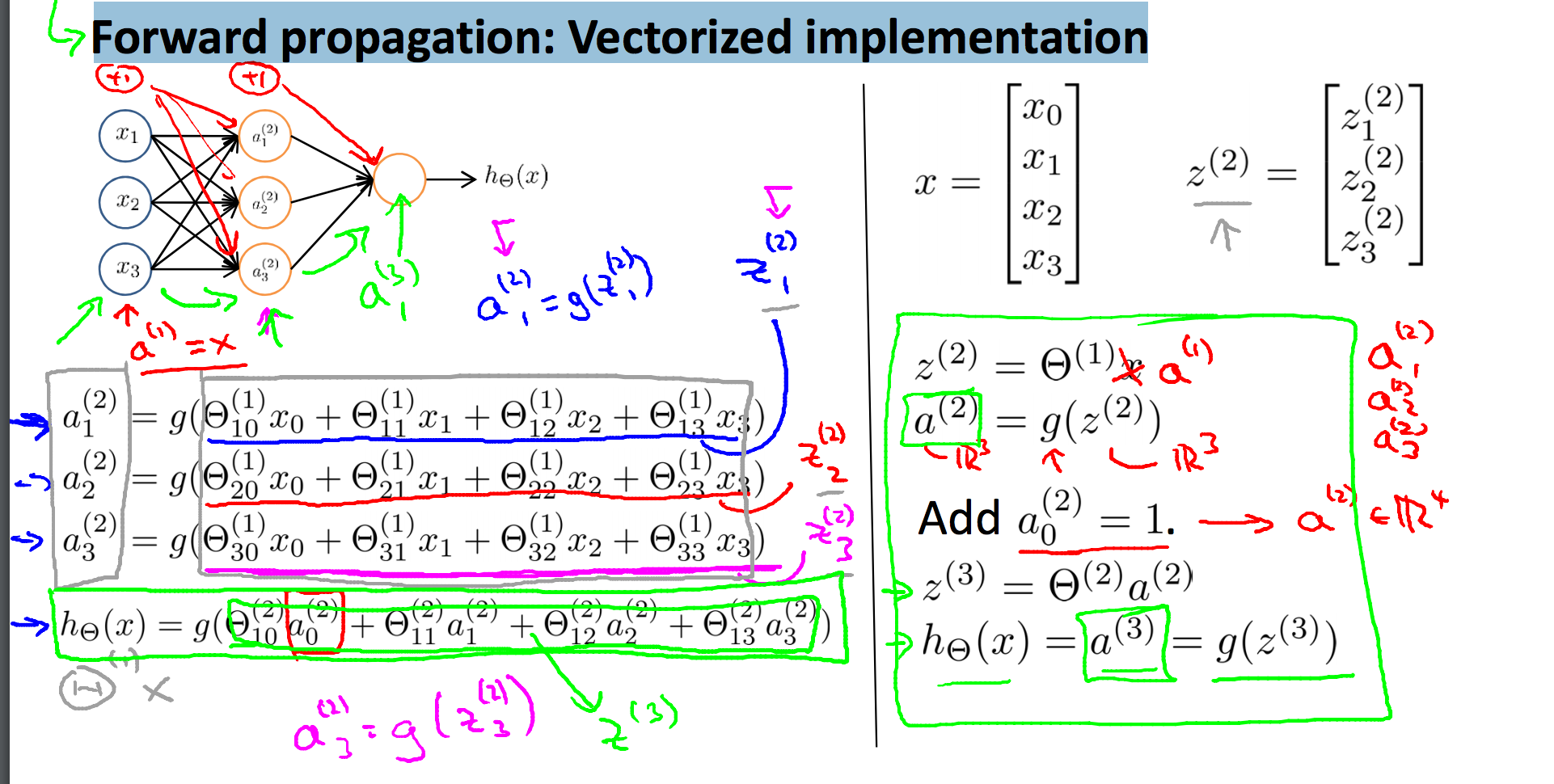

Forward propagation: Vectorized implementation

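- $a^{(1)} = x$

- Before computing $z^{(l+1)}$ for the next layer, add the bias unit $a_0^{(l)} = 1$ to the current layer's activations

- Taking the network pictured above as an example: $a^{(1)} = x$ is $4 \times 1$ (including the bias unit), $\Theta^{(1)}$ is $3 \times 4$, $z^{(2)} = \Theta^{(1)} a^{(1)}$ is $3 \times 1$, and $a^{(2)} = g(z^{(2)})$ is $3 \times 1$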
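The steps above can be sketched with NumPy. This is a minimal illustration, not a definitive implementation: the function name `forward_propagate`, the random weights, and the single output unit are assumptions. Only the $3 \times 4$ shape of $\Theta^{(1)}$ comes from the example in the notes.

```python
import numpy as np


def sigmoid(z):
    """Element-wise logistic function g(z)."""
    return 1.0 / (1.0 + np.exp(-z))


def forward_propagate(x, thetas):
    """Return the output-layer activations for one input vector.

    `x` holds the raw features (no bias); `thetas` is a list of weight
    matrices, where thetas[j] has shape (s_next, s_current + 1).
    """
    a = np.asarray(x, dtype=float)       # a^(1) = x
    for theta in thetas:
        a = np.concatenate(([1.0], a))   # add the bias unit a_0 = 1
        z = theta @ a                    # z^(l+1) = Theta^(l) a^(l)
        a = sigmoid(z)                   # a^(l+1) = g(z^(l+1))
    return a


# Assumed architecture: 3 inputs, 3 hidden units, 1 output unit.
rng = np.random.default_rng(0)
thetas = [rng.normal(size=(3, 4)), rng.normal(size=(1, 4))]
h = forward_propagate([0.5, -1.2, 2.0], thetas)
print(h.shape)  # (1,)
```

Each layer is one matrix-vector product followed by an element-wise sigmoid, which is what makes the computation vectorized: no explicit loop over individual units is needed.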

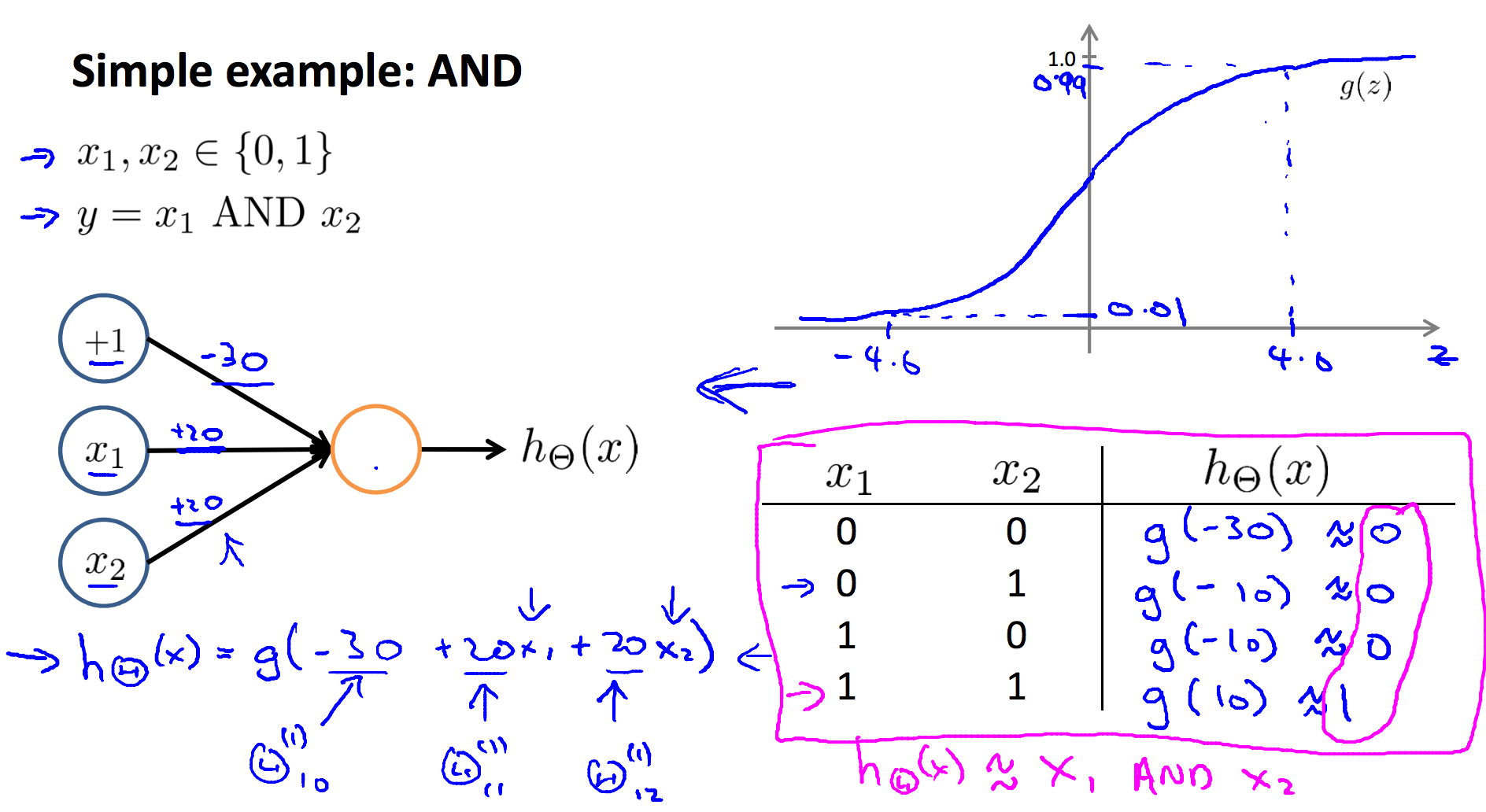

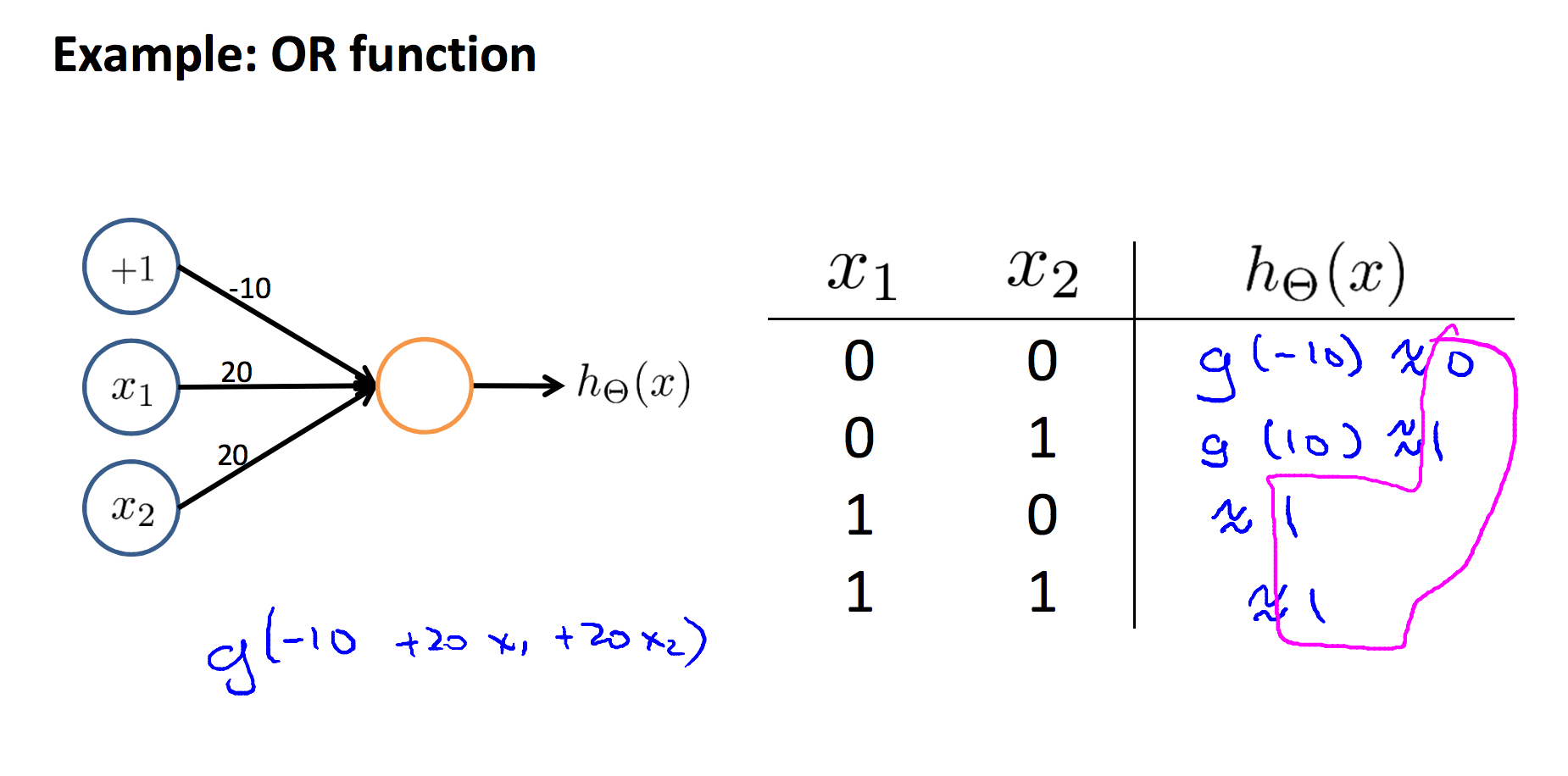

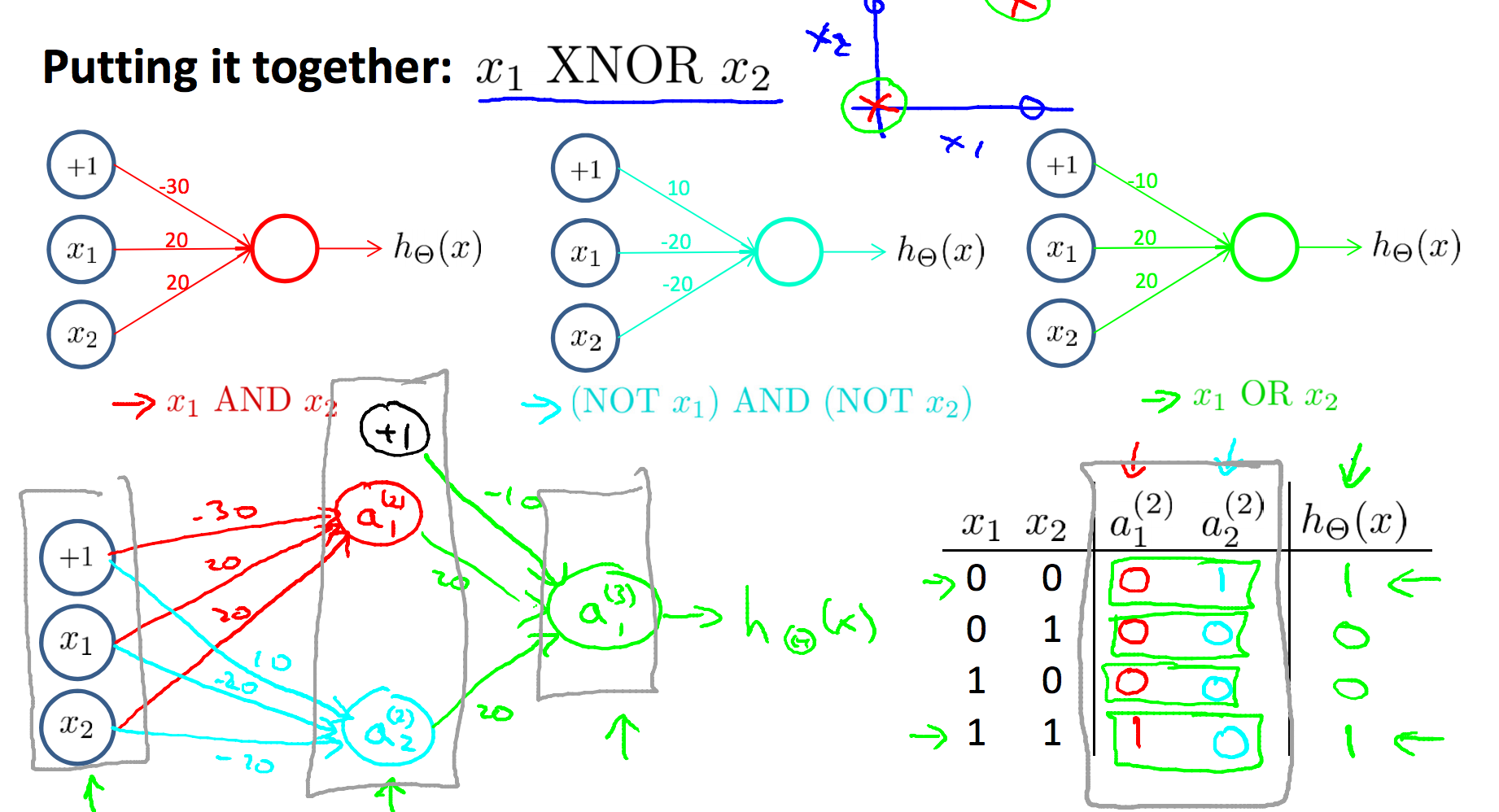

Examples and intuitions

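- $\Theta$ determines the function that the network computes

- Pay attention to the shape of $g(z)$: it is close to 0 for large negative $z$ and close to 1 for large positive $z$

- We can combine several units to build more complex functions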
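As an illustration of combining units, the sketch below builds XNOR from three logistic units. The specific weights (for AND, for "neither input is 1", and for OR) are assumed values commonly used to demonstrate this idea; they do not appear in the notes, and the names `unit` and `xnor` are hypothetical.

```python
import math


def sigmoid(z):
    """Logistic function g(z)."""
    return 1.0 / (1.0 + math.exp(-z))


def unit(theta, inputs):
    """One logistic unit; theta[0] is the bias weight."""
    z = theta[0] + sum(t * x for t, x in zip(theta[1:], inputs))
    return sigmoid(z)


# Assumed illustrative weights: large magnitudes push g(z) close to 0 or 1.
THETA_AND = [-30.0, 20.0, 20.0]   # x1 AND x2
THETA_NOR = [10.0, -20.0, -20.0]  # (NOT x1) AND (NOT x2)
THETA_OR = [-10.0, 20.0, 20.0]    # x1 OR x2


def xnor(x1, x2):
    """Two hidden units (AND, NOR) feed an OR output unit."""
    a1 = unit(THETA_AND, [x1, x2])
    a2 = unit(THETA_NOR, [x1, x2])
    return unit(THETA_OR, [a1, a2])


for x1 in (0, 1):
    for x2 in (0, 1):
        print(x1, x2, round(xnor(x1, x2)))
```

The hidden layer computes two simple functions of the inputs, and the output unit combines them. No single logistic unit can represent XNOR, because it is not linearly separable.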
